The One-Hour Company: How AI Is Collapsing the Startup Timeline

For narrow, well-scoped products, the AI startup timeline has compressed from months to hours. Engineers and operators in pharma, biotech, and medical device manufacturing who still run multi-week feasibility cycles before testing a new internal tool or workflow are already behind. Agentic AI tools now make it possible to build, deploy, and demonstrate a functional prototype in a single working session, and the implications for regulated industries are significant.

AI startup timeline compression is the measurable reduction in time required to move from concept to a functioning, revenue-generating or value-generating product, driven by agentic coding tools, automated infrastructure, and AI-driven execution layers. In life sciences and GMP environments, this matters because validation timelines, change control cycles, and resource allocation decisions are all calibrated around assumptions about how long new systems take to build and test.

FREE GUIDE

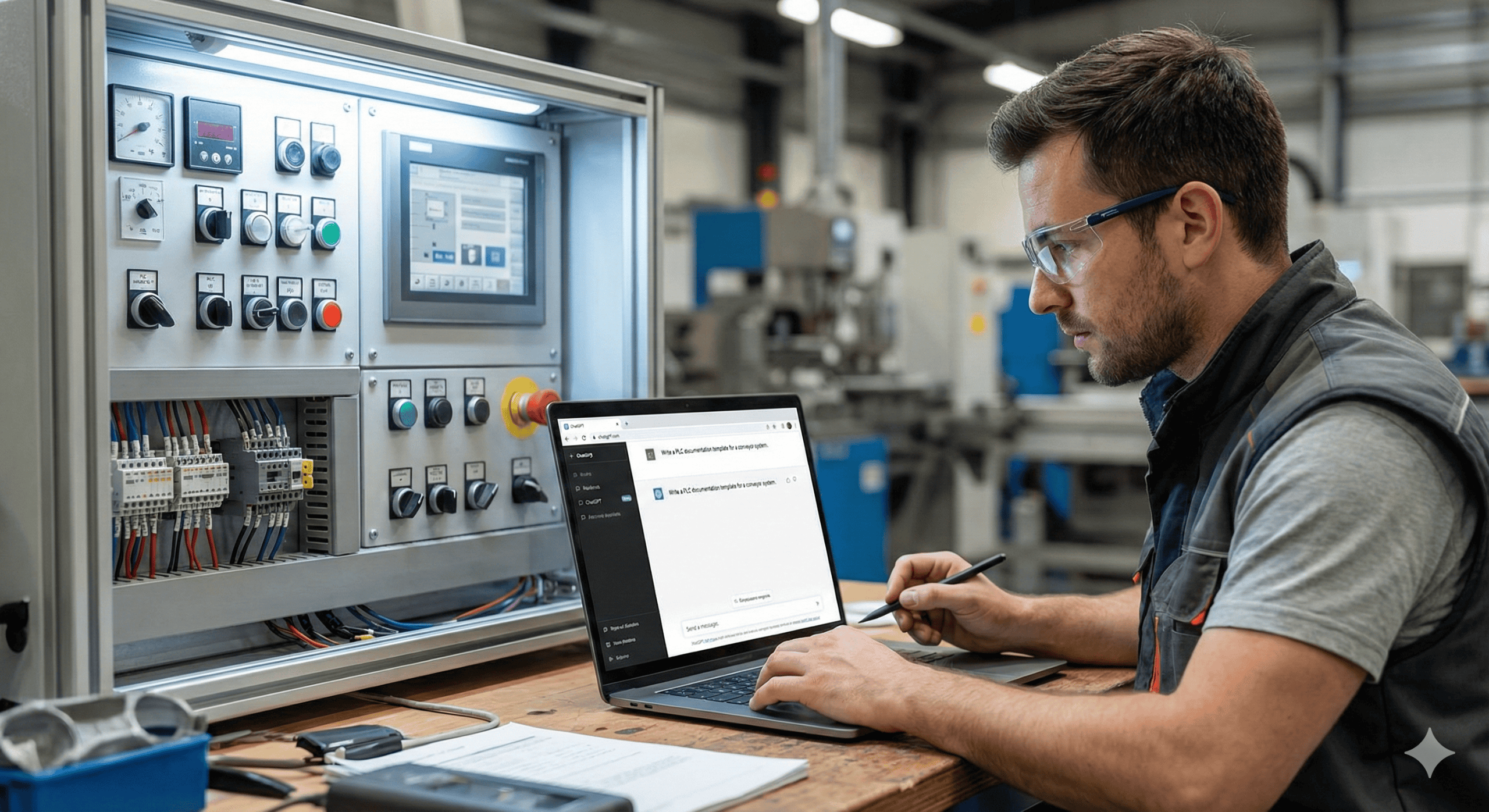

Stop Writing Design Specs by Hand

Get the free visual guide: how AI tools generate GAMP 5 documentation directly from your PLC and DCS exports. Used by Life Sciences engineers who are done doing it manually.

No spam. Unsubscribe anytime.

Greg Isenberg’s recent breakdown of 23 AI trends cutting through the noise right now is worth your attention, not because it predicts a distant future, but because it describes what early adopters are already doing. Three concepts stand out as immediately actionable for engineers and quality managers who design, qualify, and operate automated systems.

How Agentic Coding Tools Are Shrinking the MVP Build Cycle to Under One Hour

Agentic coding tools like Claude Code and OpenAI Codex have fundamentally changed the cost of experimentation. Where launching even a minimum viable product once required weeks of developer time, scoped budgets, and back-and-forth with a freelancer or internal IT queue, the new stack compresses that entire process into a single focused session.

The practical implication is not just speed. It is the lowered psychological and organizational barrier to testing an idea. When the cost of being wrong drops to a few hours of focused work, you can run more experiments, kill bad ideas faster, and double down on what gets traction. In a GMP context, this changes how teams should think about building internal tooling such as lightweight data collection interfaces, batch record automation prototypes, and deviation tracking dashboards. Tools like these no longer require a full IT project charter to test at a proof-of-concept level.

The shift is from planning-heavy execution to rapid iteration with controlled scope. If your current process for testing a new internal offering takes months, that timeline is a competitive and operational disadvantage.

Ambient Business Architecture: AI Agent-Driven Operations With Minimal Human Input

The second concept Isenberg surfaces is the ambient business. These are AI agent-driven operations that handle market monitoring, customer communications, content distribution, and routine decision-making with minimal daily human input. The operator sets strategic direction and monitors outcomes. Day-to-day execution runs largely on its own.

This is not speculative. Businesses are already deploying combinations of AI agents to handle customer service queues, generate and schedule content, monitor competitor pricing, and route leads through sales sequences without a human touching each step. The result is a lean operational model that scales without a proportional increase in headcount.

For engineers and quality managers, the ambient business concept is worth internalizing as a system design principle. The question to ask about any workflow is: what is the minimum human input required to maintain quality and strategic integrity here, and can AI handle the rest? In a regulated environment, that question does not eliminate human oversight, but it does sharpen where qualified human review is actually required versus where it has simply accumulated by default. Applied consistently, that question reshapes how validation resources and operator attention are allocated.

The Agent Economy: How AI-to-AI Task Orchestration Is Reshaping Workflow Design

The third trend is the most forward-looking, but its infrastructure is being built now. Isenberg argues that 2025 through 2030 marks the era of the agent economy, where AI agents do not just execute tasks assigned by humans but actively discover, evaluate, and contract other specialized agents to complete work on their behalf.

Consider a top-level agent managing a regulatory submission workflow. It might autonomously assign a specialized agent for document gap analysis, another for formatting and cross-referencing, and a third for version control and audit trail logging. No human makes those subcontracting decisions at the task level. The opportunity this creates is not only in building agents but in building the infrastructure around them: verification layers, audit-ready logging, role-based access controls, and coordination protocols that satisfy 21 CFR Part 11 and Annex 11 requirements in agent-to-agent transaction environments.
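The delegation pattern described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a compliant implementation: the `Orchestrator` class, the task names, the document name, and the stand-in agent functions are all hypothetical, and a plain in-memory list stands in for a real audit trail store. It shows only the core idea that every subcontracting decision is logged with who acted, what they did, and when.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable, Dict, List


@dataclass
class Orchestrator:
    """Hypothetical top-level agent that delegates work and logs each step."""
    name: str
    agents: Dict[str, Callable[[str], str]] = field(default_factory=dict)
    audit_log: List[dict] = field(default_factory=list)

    def register(self, task_type: str, agent: Callable[[str], str]) -> None:
        """Make a specialized agent available for one task type."""
        self.agents[task_type] = agent

    def _log(self, actor: str, action: str, detail: str) -> None:
        """Append an attributable, timestamped entry to the in-memory log."""
        self.audit_log.append({
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "action": action,
            "detail": detail,
        })

    def delegate(self, task_type: str, payload: str) -> str:
        """Hand a task to a registered agent, logging before and after."""
        if task_type not in self.agents:
            self._log(self.name, "rejected", task_type)
            raise KeyError(f"no agent registered for {task_type!r}")
        self._log(self.name, "delegated", task_type)
        result = self.agents[task_type](payload)
        self._log(task_type, "completed", result)
        return result


# Stand-in agents: simple functions in place of real specialized agents.
lead = Orchestrator(name="submission-lead")
lead.register("gap_analysis", lambda doc: f"gaps checked in {doc}")
lead.register("formatting", lambda doc: f"cross-references fixed in {doc}")

for task in ("gap_analysis", "formatting"):
    lead.delegate(task, "module-3-draft")

for entry in lead.audit_log:
    print(entry["actor"], entry["action"], entry["detail"])
```

Logging inside `delegate` rather than inside each agent means an agent cannot skip the log by accident, which is one reasonable way to keep the trail complete as new agents are added.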

Early movers in legal tech, financial services, and logistics are already exploring what agent orchestration looks like at the workflow level. Life sciences is not exempt from this trajectory. The engineers who understand how to design, validate, and oversee these systems will carry a significant advantage as the tooling matures and regulatory guidance catches up.

Why Distribution and Audience Remain the Human Competitive Moat in an AI-Accelerated Market

The throughline across all three concepts is consistent: distribution and trusted relationships remain the human moat, while AI increasingly owns execution. The one-hour company only works if you have somewhere to send it. Operators who have built trust, domain credibility, or an established audience before deploying these tools will move dramatically faster than those starting from scratch. The technology is available to everyone. The relationship with an audience, a customer base, or a qualified reviewer network is not.

That framing is a useful corrective to the hype. The tools lower execution costs across the board, but they do not manufacture attention or trust. In regulated industries specifically, they do not replace subject matter expertise, qualified person sign-off, or institutional knowledge of a process. Those still require sustained human investment.

How Engineers in Pharma and Biotech Should Start Running AI Workflow Experiments Now

Pick one workflow in your operation that currently takes days and ask whether an agentic tool could compress it to hours. Pick one repetitive process and map out what an ambient, agent-driven version of it would look like, including where human review checkpoints must be preserved for compliance reasons and where they exist only because no one has questioned them.

You do not need to rebuild your validation framework this quarter. But you do need to start running controlled experiments now, because the teams and vendors you compete with for talent, efficiency, and regulatory approval timelines almost certainly already are.

Frequently Asked Questions: AI Startup Timeline and Agentic Automation in Life Sciences

How fast can an AI tool actually build a functional prototype for an internal GMP workflow?

With current agentic coding tools like Claude Code or OpenAI Codex, a functional prototype for a narrow, well-defined workflow, such as a batch record data entry interface or a deviation log tracker, can be generated in one to three hours. That prototype is not validated or production-ready, but it is functional enough to evaluate feasibility, demonstrate to stakeholders, and scope a formal validation effort. The time savings are in the ideation-to-prototype gap, not in the qualification work that follows.

Can AI agents be used in GMP environments without violating 21 CFR Part 11 or Annex 11?

Yes, but the compliance burden does not disappear. AI agents that create, modify, or transmit electronic records in a regulated environment must operate within systems that meet audit trail, access control, and data integrity requirements under 21 CFR Part 11 and Annex 11. The design of an agent-driven workflow needs to define where records are generated, who or what has modification access, and how the system logs agent actions in a retrievable, attributable format. This is solvable, but it requires deliberate architecture decisions from the start, not retrofitting compliance onto a tool built without those constraints.
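As a rough illustration of what "retrievable, attributable" logging of agent actions could look like, the sketch below chains each log entry to the previous one with a SHA-256 hash so that later edits become detectable. Hash chaining is an assumption chosen for illustration, not something 21 CFR Part 11 or Annex 11 prescribes, and the function names, actor labels, and record ID are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    """Hash an entry body with a stable key order."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(log: list, actor: str, action: str, record_id: str) -> dict:
    """Append an entry that records who did what, when, to which record."""
    body = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "actor": actor,          # human user or agent identifier
        "action": action,
        "record_id": record_id,
        "prev_hash": log[-1]["hash"] if log else GENESIS_HASH,
    }
    entry = dict(body, hash=_digest(body))
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    """Return True only if no entry was altered, removed, or reordered."""
    prev_hash = GENESIS_HASH
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True


log = []
append_entry(log, "agent:deviation-drafter", "create", "DEV-001")
append_entry(log, "user:qa.reviewer", "approve", "DEV-001")
print(verify_chain(log))
```

Changing any field of an earlier entry after the fact causes `verify_chain` to fail, which is the property that matters here. Access control, electronic signatures, and retention are separate concerns this sketch does not address.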

What is the difference between an AI automation tool and an AI agent in a manufacturing context?

An AI automation tool executes a defined, static task when triggered, similar to a rule-based script with added intelligence. An AI agent is goal-directed: it receives an objective, determines the steps required to achieve it, executes those steps, evaluates results, and adjusts its approach based on feedback, sometimes by invoking other tools or agents. In a manufacturing context, an automation tool might flag out-of-spec values in a data feed. An agent might detect the flag, pull relevant batch history, cross-reference the SOP, draft a deviation report, and route it to the appropriate owner, all without human initiation of each step.
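The manufacturing example can be made concrete with a small sketch. The first function is the rule-based automation: it only flags values outside a range. The second is a scripted stand-in for the chain of steps an agent would carry out. A real agent would decide those steps itself from its objective, so treat the fixed sequence here as a simplification. All names, limits, batch IDs, and SOP references are hypothetical.

```python
def flag_out_of_spec(readings, low, high):
    """Rule-based automation: return (index, value) pairs outside [low, high]."""
    return [(i, v) for i, v in enumerate(readings) if v < low or v > high]


def draft_deviation(batch_id, readings, low, high, batch_history, sop_ref, owner):
    """Scripted stand-in for an agent's follow-up steps after a flag.

    Detects the flag, pulls prior deviations for the batch, attaches the
    SOP reference, drafts a report, and routes it to an owner for review.
    Returns None when every reading is within limits.
    """
    flags = flag_out_of_spec(readings, low, high)
    if not flags:
        return None
    return {
        "batch_id": batch_id,
        "out_of_spec": flags,
        "prior_deviations": batch_history.get(batch_id, []),
        "sop_reference": sop_ref,
        "routed_to": owner,
        "status": "draft, awaiting human review",
    }


history = {"B-1042": ["DEV-0007"]}  # hypothetical batch history lookup
report = draft_deviation(
    "B-1042", [7.0, 7.1, 8.4], 6.8, 7.4, history, "SOP-QA-012", "qa.owner"
)
print(report)
```

The draft leaves the report in a review state rather than closing it, which keeps the final quality decision with a person.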

How should a quality manager evaluate the risk of deploying an ambient AI system in a regulated workflow?

Risk evaluation should follow the same risk-based logic as any computerized system validation under GAMP 5, with particular attention to where the system makes decisions versus where it only executes predefined logic. The higher the degree of autonomous decision-making, the more critical it becomes to define the boundaries of agent authority, establish human review triggers, and document the basis for trusting the system’s outputs. An ambient AI system routing non-critical communications carries a different risk profile than one generating or approving quality records. Scope the autonomy to match the risk of the decisions involved.
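One way to make "scope the autonomy to match the risk" operational is to write the rule down as a lookup that every new workflow must pass through. The sketch below is a hypothetical policy, not a GAMP 5 classification: the three impact levels and the oversight wording are assumptions that a quality unit would replace with its own.

```python
from enum import Enum


class Impact(Enum):
    """Hypothetical impact levels for what an agent's output touches."""
    NON_CRITICAL = "non-critical communication"
    QUALITY_RELEVANT = "quality-relevant data"
    QUALITY_RECORD = "quality record creation or approval"


def required_oversight(impact: Impact, agent_decides: bool) -> str:
    """Map impact and degree of autonomy to a minimum level of human review.

    agent_decides is True when the agent makes a judgment call, and False
    when it only executes predefined logic.
    """
    if impact is Impact.QUALITY_RECORD:
        return "human approval before the record is finalized"
    if impact is Impact.QUALITY_RELEVANT:
        return "human review of each output" if agent_decides else "periodic sampled review"
    return "periodic sampled review" if agent_decides else "logging only"


for impact in Impact:
    for agent_decides in (False, True):
        print(impact.value, "|", agent_decides, "|", required_oversight(impact, agent_decides))
```

Keeping the policy in one function makes the basis for each oversight level easy to document and easy to challenge during review.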

Is the one-hour company concept relevant to medical device startups operating under FDA design controls?

It is relevant, with caveats. The one-hour company concept applies most directly to the early concept validation phase, before formal design controls begin, where a team needs to test whether a device feature, workflow, or market assumption has merit before committing to a formal design control process. AI tools can compress that exploratory phase significantly. Once a device enters formal development under 21 CFR Part 820 or ISO 13485, the design control, verification, and validation requirements do not compress in the same way. The value of the accelerated AI startup timeline in medical device development is primarily in reducing wasted investment before the regulatory clock starts, not in shortcutting the regulated process itself.
