Claude Code Has a Built-In Coach. Most Users Never Find It.

This beginner tutorial covers two built-in Claude Code slash commands, /powerup and /insights, that most users never discover. /powerup works as a structured onboarding system, and /insights works as a personalized performance feedback loop. For engineers and quality managers in regulated industries who are evaluating AI coding tools for team deployment, understanding these features changes how you approach both adoption and ongoing training.

Here is the situation most teams find themselves in: they adopt a new AI tool, develop habits in the first few weeks, and those habits calcify. Months later, they are using 30 percent of what the tool can do and wondering why the productivity gains never fully materialized. The commands described below exist specifically to break that pattern.

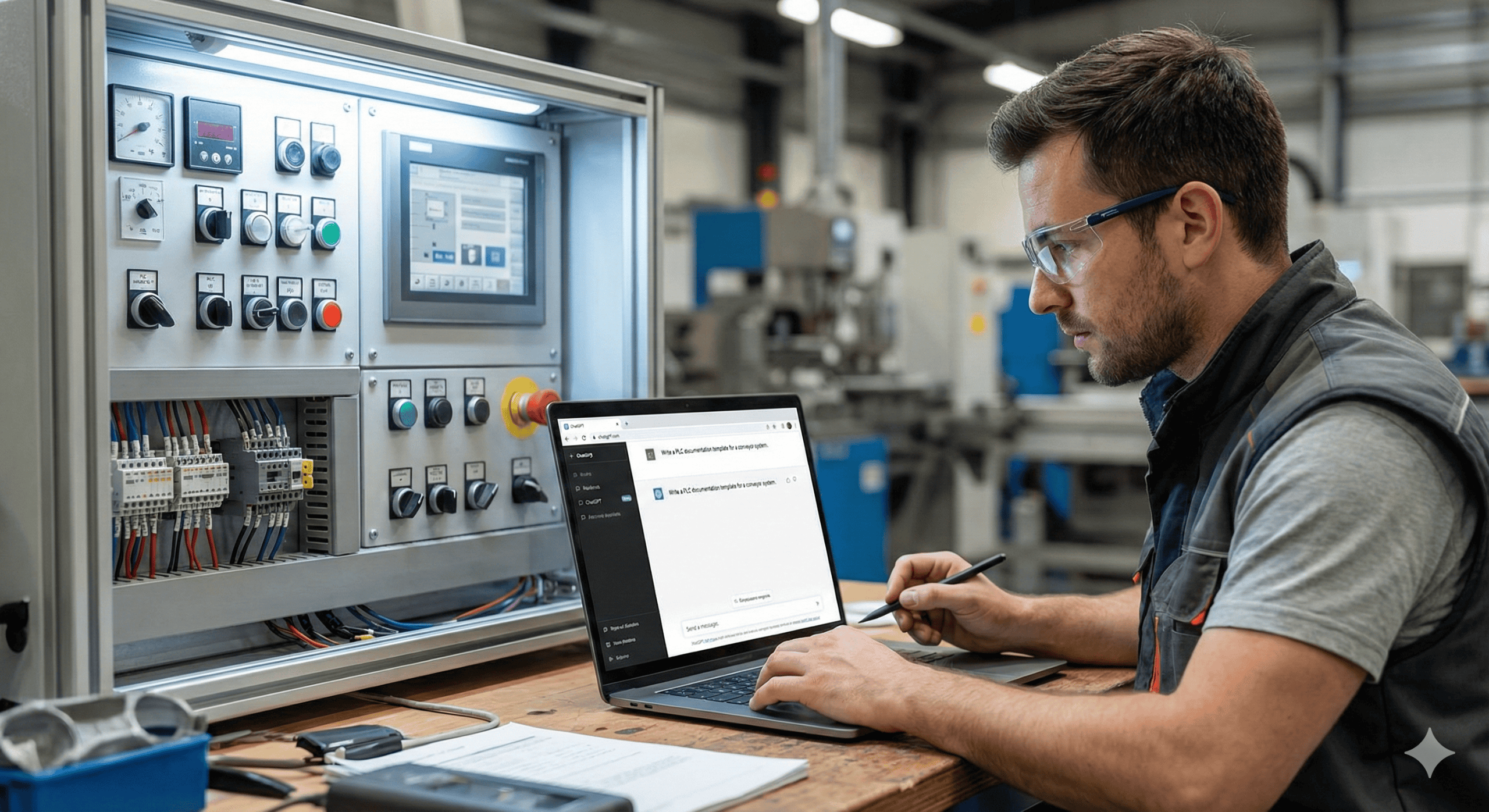

Claude Code slash commands are text-based directives entered directly into the Claude Code interface that trigger specific platform functions, from guided tutorials to session analytics. In life sciences and GMP-regulated environments, where tool validation and consistent usage patterns matter, built-in coaching commands can accelerate compliant adoption across cross-functional teams without relying entirely on external training resources.

How the /powerup Command Works as Structured Onboarding for Claude Code

The /powerup command is not a one-time setup wizard. It is structured onboarding that you can run at any point, not just during initial installation. It walks users through core Claude Code concepts in a progressive sequence, so knowledge builds systematically instead of through trial and error.

For teams rolling out AI coding tools to engineers who are not deep in developer documentation, or to quality professionals who need functional proficiency without a steep technical ramp, this matters. A guided, repeatable onboarding sequence reduces the variance in how team members actually use the tool. That variance is where inconsistent outputs and compliance risks tend to originate.

If you have been using Claude Code for months and have never run /powerup, run it anyway. The command surfaces concepts and workflows that most users skip during initial setup and never return to.

What the /insights Command Generates from Your Claude Code Session Data

Claude Code logs your session activity as JSONL files over a rolling 30-day window. The /insights command analyzes that data and generates a personalized HTML report card. The report covers your productivity patterns, which tools you rely on most, where your workflows break down, and specific recommendations tailored to how you actually work, not how the average user works.
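To make the mechanics concrete, here is a minimal Python sketch of the kind of analysis such a report implies: read JSONL records, keep the ones inside a 30-day window, and tally which tools appear most often. The record fields (`timestamp`, `tool`), the function name `tally_tool_usage`, and the sample data are all assumptions for illustration. The actual log schema and the internals of /insights are not described in this post.

```python
import json
from collections import Counter
from datetime import datetime, timedelta, timezone


def tally_tool_usage(lines, now, window_days=30):
    """Count tool names in JSONL records no older than window_days.

    Assumes each record is a JSON object with a hypothetical ISO 8601
    "timestamp" field (with a UTC offset) and a hypothetical "tool" field.
    """
    cutoff = now - timedelta(days=window_days)
    counts = Counter()
    for line in lines:
        line = line.strip()
        if not line:
            continue
        try:
            record = json.loads(line)
        except json.JSONDecodeError:
            continue  # skip malformed lines instead of failing the whole run
        if not isinstance(record, dict):
            continue
        ts = record.get("timestamp")
        tool = record.get("tool")
        if not ts or not tool:
            continue
        if datetime.fromisoformat(ts) >= cutoff:
            counts[tool] += 1
    return counts


# Made-up sample records; real session logs will look different.
sample = [
    '{"timestamp": "2025-01-20T10:00:00+00:00", "tool": "Edit"}',
    '{"timestamp": "2025-01-25T09:30:00+00:00", "tool": "Bash"}',
    '{"timestamp": "2025-01-28T14:15:00+00:00", "tool": "Edit"}',
    '{"timestamp": "2024-12-15T08:00:00+00:00", "tool": "Bash"}',
    'not json',
]
now = datetime(2025, 1, 31, tzinfo=timezone.utc)
counts = tally_tool_usage(sample, now)
print(counts.most_common())
```

The December record falls outside the window and the malformed line is skipped, so only three records are counted.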

The tool watches how you use it, then tells you how to use it better. That is not a tutorial. That is a feedback loop.

For a quality manager overseeing AI tool deployment across a team, the /insights output is not just useful for individual improvement. It is a data source. You can use it to identify where team members are underutilizing core features, where workflows are breaking at predictable points, and where targeted retraining would close the most significant gaps.
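As a rough sketch of that team-level view, the Python below flags core features that fewer than half of a team has used. It assumes you have already collected per-person feature counts by some other means, for example by transcribing them from each individual report. The function name `underused_features`, the feature labels, and the numbers are hypothetical, and nothing here is an export format that /insights is known to provide.

```python
def underused_features(team_usage, core_features, min_share=0.5):
    """Return core features used by fewer than min_share of team members.

    team_usage maps a person to a dict of feature name -> usage count.
    The result maps each flagged feature to the share of people using it.
    """
    team_size = len(team_usage)
    gaps = {}
    for feature in core_features:
        users = sum(
            1 for counts in team_usage.values() if counts.get(feature, 0) > 0
        )
        share = users / team_size if team_size else 0.0
        if share < min_share:
            gaps[feature] = share
    return gaps


# Hypothetical counts for a four-person team.
team_usage = {
    "engineer_a": {"plan_mode": 12, "subagents": 0, "custom_commands": 3},
    "engineer_b": {"plan_mode": 0, "subagents": 0, "custom_commands": 1},
    "engineer_c": {"plan_mode": 5, "subagents": 2, "custom_commands": 0},
    "engineer_d": {"plan_mode": 0, "subagents": 0, "custom_commands": 4},
}
gaps = underused_features(
    team_usage, ["plan_mode", "subagents", "custom_commands"]
)
print(gaps)
```

A feature that shows up in this list is a candidate topic for targeted retraining, not proof of a problem. The threshold is a judgment call for your team.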

Why Embedded AI Coaching Signals a Shift in How Adoption Should Be Managed

The /insights feature is interesting on its own, but the broader implication is what should catch your attention if you are responsible for AI adoption at any scale in a regulated environment.

We are entering a phase where AI tools are beginning to close the loop between behavior and guidance. Instead of offering static documentation or generic best practices, these tools can now observe actual usage, identify gaps and inefficiencies, and surface recommendations that are specific to the individual or team using them. The coaching is embedded in the product itself.

This pattern will spread. Expect to see it in AI writing assistants, document management platforms, CAPA workflow tools, and any other system where usage data can be meaningfully analyzed. The question for engineering leads and quality managers is not whether this capability will arrive in the tools your organization uses. It is whether your team is positioned to take advantage of it when it does, and whether your current onboarding and continuous improvement processes are built to accommodate it.

A Senior Automation Engineer’s Assessment of What These Features Signal for Life Sciences Teams

What makes /insights compelling is not the feature itself but what it signals. AI tool vendors are starting to treat usage data as a coaching asset rather than just an analytics metric. For anyone managing AI adoption across a team or an organization in pharma, biotech, or medical device manufacturing, that shift changes how you should think about onboarding, training, and continuous improvement. The tool is not just something you learn once. It becomes something that keeps coaching you based on how you actually use it. In a GMP context, that has direct implications for how you write training procedures and how you define ongoing competency requirements for AI-assisted workflows.

How to Evaluate Any AI Coding Tool for Team-Wide Productivity Improvement

If you are already using Claude Code, run /powerup in your next session even if you have been on the platform for months. Then keep working in Claude Code for a few more weeks so the session logs reflect your updated habits, and pull your /insights report. Treat the output like a performance review from a colleague who has been observing your workflow without judgment and with useful data behind every recommendation.

If you are evaluating AI tools for team deployment and Claude Code is on your list, use this as part of your evaluation framework. Ask vendors not just what their tool can do, but how it helps users improve over time. Does it track usage patterns? Does it surface personalized recommendations? Does it adapt to how your team actually works, or does it stay static after initial deployment?

The AI tools that will deliver lasting productivity gains in regulated environments are not the ones with the longest feature lists. They are the ones that help users continuously close the gap between how they currently work and how they could work. That gap is where the real ROI lives, and embedded coaching features like /powerup and /insights are the first generation of tools built to help you find it systematically.

Frequently Asked Questions: Claude Code for Engineers in Regulated Industries

What does the Claude Code /insights command actually show you?

The /insights command analyzes your JSONL session logs from the past 30 days and generates a personalized HTML report that covers your productivity patterns, most-used tools, workflow breakdown points, and specific improvement recommendations based on your actual usage data. It does not show you generic tips. It shows you recommendations calibrated to how you specifically work.

Is Claude Code appropriate for non-developers in pharma and biotech quality roles?

Yes, with the right onboarding approach. The /powerup command gives non-developers a structured, progressive introduction to Claude Code concepts without requiring them to read through technical documentation first. Quality managers and validation engineers who need functional proficiency rather than deep coding expertise can use /powerup to build a working knowledge base on their own timeline.

How does Claude Code store session data, and is it safe for use in GMP environments?

Claude Code logs session activity as local JSONL files over a rolling 30-day window. The /insights command reads from those local logs to generate its report. As with any AI tool used in a GMP-regulated context, your organization should conduct a formal risk assessment, review Anthropic’s data handling documentation, and determine whether Claude Code falls within scope of your computer system validation requirements before deploying it in workflows that touch regulated data or processes.
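As one possible input to that risk assessment, the Python sketch below inventories the JSONL files under a directory and notes which ones were modified within the last 30 days. The directory is passed in as a parameter because the log location is not stated in this post, so confirm it for your own installation. The function name `inventory_jsonl` and the demo files are hypothetical.

```python
import os
import tempfile
import time
from pathlib import Path


def inventory_jsonl(log_dir, now=None, window_days=30):
    """List *.jsonl files under log_dir with size and a recency flag.

    log_dir is whatever directory you have confirmed holds the session
    logs; this sketch does not assume a particular location.
    """
    now = time.time() if now is None else now
    cutoff = now - window_days * 86400  # window length in seconds
    rows = []
    for path in sorted(Path(log_dir).rglob("*.jsonl")):
        stat = path.stat()
        rows.append(
            {
                "file": str(path.relative_to(log_dir)),
                "bytes": stat.st_size,
                "within_window": stat.st_mtime >= cutoff,
            }
        )
    return rows


# Demo against a temporary directory with one recent and one stale file.
with tempfile.TemporaryDirectory() as tmp:
    (Path(tmp) / "recent.jsonl").write_text('{"example": true}\n')
    old = Path(tmp) / "old.jsonl"
    old.write_text("")
    stale = time.time() - 45 * 86400
    os.utime(old, (stale, stale))  # backdate the file by 45 days
    report = inventory_jsonl(tmp)

for row in report:
    print(row)
```

An inventory like this only tells you what is stored locally and how recent it is. It does not replace a review of the vendor's data handling documentation.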

How is /powerup different from just reading the Claude Code documentation?

Documentation is static and self-directed. The /powerup command delivers a structured, progressive sequence inside the tool itself, which means users are learning in the context where they will actually apply the knowledge. For teams where training consistency matters, such as in regulated manufacturing environments, an in-product guided sequence also produces more repeatable results than asking each team member to self-navigate external documentation.

Can the /insights report be used to support AI tool qualification or training documentation in a validated environment?

The /insights HTML report is not a formal validation artifact, but it does provide timestamped, usage-based data that could inform competency assessments or retraining decisions in a quality management framework. If your organization requires documented evidence of ongoing proficiency with AI tools used in regulated workflows, the /insights output could serve as a supporting input to that process, though it should not be treated as a substitute for formal training records.
